Installation For 'Kafka developers' with Kafka, Registry, Connect, Landoop, Stream-Reactor, KCQL


Latest revision as of 20:42, 1 December 2018


Docker Installation

Docker Pull Command

docker pull landoop/fast-data-dev

Git: https://github.com/Landoop/fast-data-dev

$ git clone https://github.com/Landoop/fast-data-dev.git

When you need:

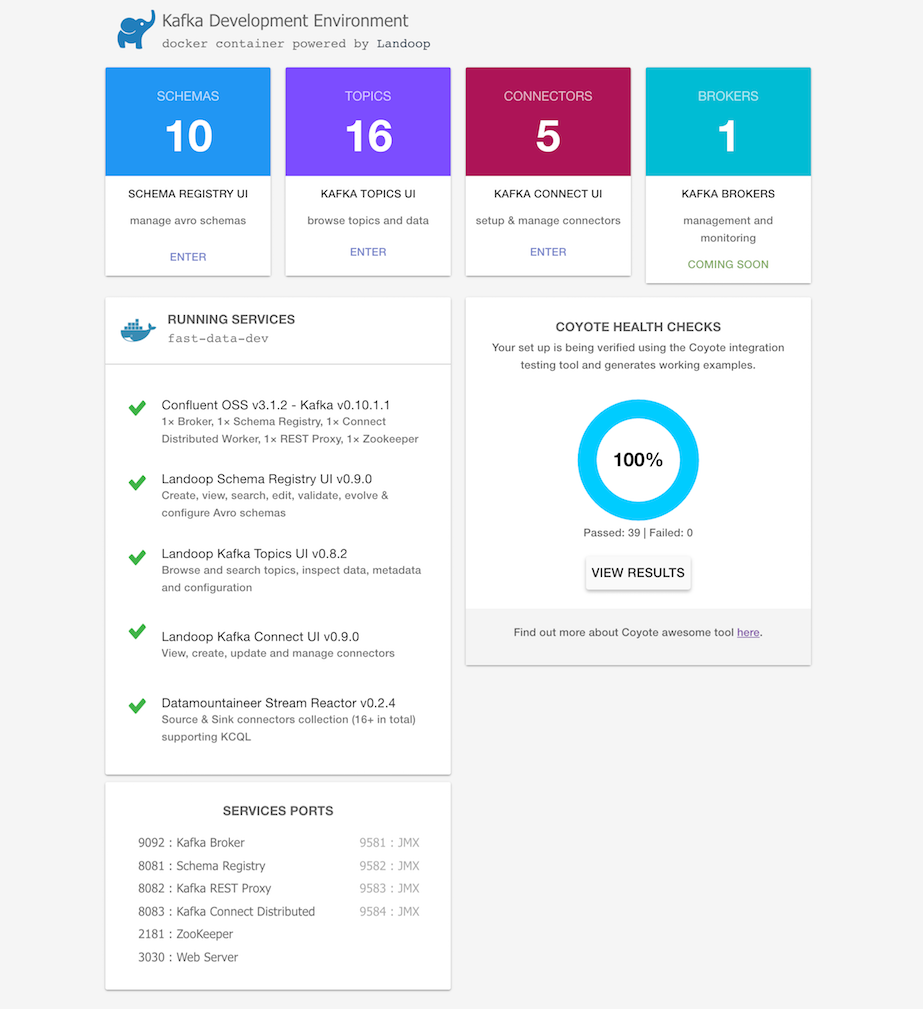

- A Kafka distribution with Apache Kafka, Kafka Connect, Zookeeper, Confluent Schema Registry and REST Proxy

- Landoop Lenses or kafka-topics-ui, schema-registry-ui, kafka-connect-ui

- Landoop Stream Reactor, 25+ Kafka Connectors to simplify ETL processes

- Integration testing and examples embedded into the docker

just run:

docker run --rm --net=host landoop/fast-data-dev

That's it. Visit http://localhost:3030 to get into the fast-data-dev environment.

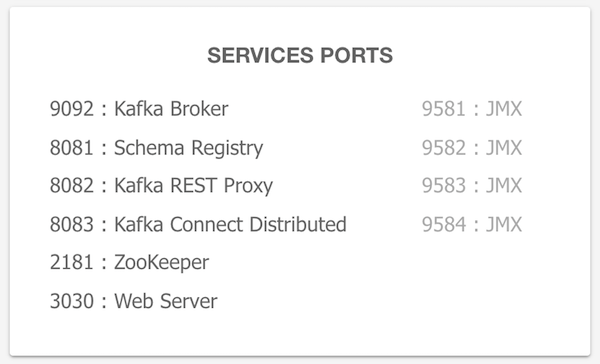

All the service ports are exposed and can be used from localhost or from within IntelliJ. By default, the Kafka broker is exposed at port 9092, ZooKeeper at port 2181, Schema Registry at 8081, and Connect at 8083. As an example, to access the JMX data of the broker run:

jconsole localhost:9581

If you want the services to be remotely accessible, you may need to pass in an IP address or hostname of your machine that other machines can use to reach it:

docker run --rm --net=host -e ADV_HOST=<IP> landoop/fast-data-dev
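To confirm that the ports listed above are actually reachable, a small helper like the following can be used. This is a minimal sketch: the function names `service_port`, `port_open` and `check_services` are hypothetical, the port numbers are the defaults quoted in this section, and the connection test relies on the `/dev/tcp` feature of bash, so it assumes bash rather than a plain POSIX shell.

```shell
# Default service ports as listed in this section.
service_port() {
  case "$1" in
    ui)              echo 3030 ;;
    broker)          echo 9092 ;;
    zookeeper)       echo 2181 ;;
    schema-registry) echo 8081 ;;
    connect)         echo 8083 ;;
    broker-jmx)      echo 9581 ;;
    *) echo "unknown service: $1" >&2; return 1 ;;
  esac
}

# Succeeds when a TCP connection to host:port can be opened.
# Uses bash's /dev/tcp pseudo-device (an assumption: bash is the shell).
port_open() {
  local host="$1"
  local port="$2"
  (exec 3<>"/dev/tcp/${host}/${port}") 2>/dev/null
}

# Print one "open"/"closed" line per known service.
check_services() {
  local host="${1:-localhost}"
  local name
  local port
  for name in ui broker zookeeper schema-registry connect broker-jmx; do
    port="$(service_port "$name")"
    if port_open "$host" "$port"; then
      echo "$name ($port): open"
    else
      echo "$name ($port): closed"
    fi
  done
}
```

Calling `check_services` with no argument checks localhost. Passing the value you used for ADV_HOST checks the same ports on that address, although a firewalled host may make each check wait until the connection attempt times out.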

Mac and Windows users (docker-machine)

Create a VM with 4+GB RAM using Docker Machine:

docker-machine create --driver virtualbox --virtualbox-memory 4096 landoop

Run docker-machine ls to verify that the Docker Machine is running correctly. The command's output should be similar to:

$ docker-machine ls

Configure your terminal to be able to use the new Docker Machine named landoop:

eval $(docker-machine env landoop)

And run the Kafka Development Environment. Define ports, advertise the hostname and use extra parameters:

docker run --rm -p 2181:2181 -p 3030:3030 -p 8081-8083:8081-8083 \

-p 9581-9585:9581-9585 -p 9092:9092 -e ADV_HOST=localhost \

landoop/fast-data-dev:latest

That's it. Visit http://localhost:3030 to get into the fast-data-dev environment.
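The services take a little while to start, so the web interface may not answer immediately. The sketch below polls the UI address until it responds. The function name `wait_for_ui` and the default of 30 attempts at 2-second intervals are illustrative assumptions, not part of fast-data-dev. It only needs curl.

```shell
# Poll the fast-data-dev web UI until it answers or the attempts run out.
# Arguments: URL, maximum number of attempts, delay between attempts (seconds).
wait_for_ui() {
  local url="${1:-http://localhost:3030}"
  local attempts="${2:-30}"
  local delay="${3:-2}"
  local i=1
  while [ "$i" -le "$attempts" ]; do
    # -s hides progress output, -f turns HTTP error statuses into failures
    if curl -sf -o /dev/null "$url"; then
      echo "UI is up at $url"
      return 0
    fi
    sleep "$delay"
    i=$((i + 1))
  done
  echo "UI did not respond at $url after $attempts attempts" >&2
  return 1
}
```

Running `wait_for_ui` right after the docker run command (started in another terminal or with -d) blocks until the environment is ready, which is handy before launching scripted tests.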

Run on the Cloud

You may want to quickly run a Kafka instance in GCE or AWS and access it from your local computer. Fast-data-dev has you covered.

Start a VM in the respective cloud. You can use the OS of your choice, provided it has a docker package. CoreOS is a nice choice as you get docker out of the box.

Next you have to open the firewall, both for your own machines and for the VM itself. This is important!

Once the firewall is open try:

docker run -d --net=host -e ADV_HOST=[VM_EXTERNAL_IP] \

-e RUNNING_SAMPLEDATA=1 landoop/fast-data-dev

Alternatively, just expose the ports you need, e.g.:

docker run -d -p 2181:2181 -p 3030:3030 -p 8081-8083:8081-8083 \

-p 9581-9585:9581-9585 -p 9092:9092 -e ADV_HOST=[VM_EXTERNAL_IP] \

-e RUNNING_SAMPLEDATA=1 landoop/fast-data-dev

Enjoy Kafka, Schema Registry, Connect, Landoop UIs and Stream Reactor.
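Because the external IP differs for every VM, it can help to generate the command instead of editing it by hand. The following sketch prints (but does not run) the port-mapping variant shown above for a given address. The function name `fdd_cloud_cmd` is hypothetical, and the address 203.0.113.10 in the usage note is only a placeholder from the documentation range.

```shell
# Print the docker command for a given advertised host; nothing is executed.
fdd_cloud_cmd() {
  local adv_host="$1"
  local mapping
  if [ -z "$adv_host" ]; then
    echo "usage: fdd_cloud_cmd <external-ip-or-hostname>" >&2
    return 1
  fi
  printf '%s' "docker run -d"
  # Same port mappings as in the command above
  for mapping in 2181:2181 3030:3030 8081-8083:8081-8083 9581-9585:9581-9585 9092:9092; do
    printf ' -p %s' "$mapping"
  done
  printf ' -e ADV_HOST=%s -e RUNNING_SAMPLEDATA=1 landoop/fast-data-dev\n' "$adv_host"
}
```

For example, `fdd_cloud_cmd 203.0.113.10` prints a single-line command that can be reviewed and then pasted into the VM's shell.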

Building it

Fast-data-dev/kafka-lenses-dev require a recent version of docker which supports multistage builds.

To build it just run:

docker build -t landoop/fast-data-dev .

Periodically pull from docker hub to refresh your cache.
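Multi-stage builds were introduced in Docker 17.05, so a quick version check tells you whether the build above can work. The helper below is a minimal sketch. The name `supports_multistage` is hypothetical, and it only compares the first two components of a version string such as 17.05.0-ce.

```shell
# Succeeds when the given Docker version string is 17.05 or newer.
supports_multistage() {
  local version="$1"
  local major
  local minor
  major="${version%%.*}"      # text before the first dot
  minor="${version#*.}"       # drop the major component
  minor="${minor%%.*}"        # keep only the second component
  [ "$major" -gt 17 ] || { [ "$major" -eq 17 ] && [ "$minor" -ge 5 ]; }
}
```

A possible use is `supports_multistage "$(docker version --format '{{.Server.Version}}')" && echo "multi-stage build supported"`, which reads the version from the running Docker daemon.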

If you have an older version of Docker installed, try the single-stage build at the expense of the extra size:

docker build -t landoop/fast-data-dev -f Dockerfile-singlestage .

Execute Kafka command line tools

Do you need to execute Kafka-related console tools? While your Kafka container is running, try something like:

docker run --rm -it --net=host landoop/fast-data-dev kafka-topics --zookeeper localhost:2181 --list

Or enter the container to use any tool you like:

docker run --rm -it --net=host landoop/fast-data-dev bash

View logs

You can view the logs from the web interface. If you prefer the command line, every application stores its logs under /var/log inside the container. If you have your container's ID, or name, you could do something like:

docker exec -it <ID> cat /var/log/broker.log

Kafka Test

Apache Kafka Download

Whilst you install Landoop, download Apache Kafka from https://kafka.apache.org/quickstart

We downloaded the 2.1.0 release and un-tarred it:

$ tar -xzf kafka_2.11-2.1.0.tgz

$ cd kafka_2.11-2.1.0

That's it. We are ready to test.
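The download and unpack steps can also be scripted. The sketch below assumes the release is fetched from the Apache archive at archive.apache.org, which is an assumption on our part since the text above only links to the quickstart page. The function names are hypothetical, and the Scala and Kafka versions match the archive used in this section.

```shell
SCALA_VERSION="2.11"
KAFKA_VERSION="2.1.0"

# Archive file name for a Scala/Kafka version pair, e.g. kafka_2.11-2.1.0.tgz
kafka_archive_name() {
  echo "kafka_${1}-${2}.tgz"
}

# Download location (assumed: the Apache archive layout dist/kafka/<version>/)
kafka_archive_url() {
  echo "https://archive.apache.org/dist/kafka/${2}/$(kafka_archive_name "$1" "$2")"
}

# Download (unless already present), unpack, and enter the Kafka directory.
fetch_kafka() {
  local archive
  archive="$(kafka_archive_name "$SCALA_VERSION" "$KAFKA_VERSION")"
  if [ ! -f "$archive" ]; then
    # -f fails on HTTP errors, -L follows redirects, -O keeps the remote name
    curl -fLO "$(kafka_archive_url "$SCALA_VERSION" "$KAFKA_VERSION")" || return 1
  fi
  tar -xzf "$archive" || return 1
  cd "${archive%.tgz}"
}
```

Calling `fetch_kafka` in the directory where you want Kafka leaves you inside kafka_2.11-2.1.0, ready for the commands that follow.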

If your Landoop environment is not running, start it:

docker run --rm -p 2181:2181 -p 3030:3030 -p 8081-8083:8081-8083 \

-p 9581-9585:9581-9585 -p 9092:9092 -e ADV_HOST=localhost \

landoop/fast-data-dev:latest

Create a topic

Let's create a topic named "test" with a single partition and only one replica:

$ bin/kafka-topics.sh --create --zookeeper localhost:2181 --replication-factor 1 --partitions 1 --topic test

We can now see that topic if we run the list topic command:

$ bin/kafka-topics.sh --list --zookeeper localhost:2181

test

Alternatively, instead of manually creating topics you can also configure your brokers to auto-create topics when a non-existent topic is published to.
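Running the create command a second time fails because the topic already exists, which is inconvenient in setup scripts. A minimal sketch of a "create only if missing" wrapper built from the two commands above follows. The function names `topic_exists` and `ensure_topic` and the `KAFKA_TOPICS` and `ZOOKEEPER` variables are hypothetical conveniences, with defaults taken from this section.

```shell
KAFKA_TOPICS="${KAFKA_TOPICS:-bin/kafka-topics.sh}"
ZOOKEEPER="${ZOOKEEPER:-localhost:2181}"

# Succeeds when the topic name appears in the --list output.
topic_exists() {
  # -F literal match, -x whole line, -q no output
  "$KAFKA_TOPICS" --list --zookeeper "$ZOOKEEPER" | grep -qxF -- "$1"
}

# Create the topic with one partition and one replica unless it is already there.
ensure_topic() {
  local topic="$1"
  if topic_exists "$topic"; then
    echo "topic '$topic' already exists"
  else
    "$KAFKA_TOPICS" --create --zookeeper "$ZOOKEEPER" \
      --replication-factor 1 --partitions 1 --topic "$topic"
  fi
}
```

Run from the Kafka directory, `ensure_topic test` can be repeated safely. The whole-line match keeps a topic named "test" from being confused with one named "test-2".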

Send some messages

Kafka comes with a command line client that will take input from a file or from standard input and send it out as messages to the Kafka cluster. By default, each line will be sent as a separate message.

Run the producer and then type a few messages into the console to send to the server.

$ bin/kafka-console-producer.sh --broker-list localhost:9092 --topic test

This is a message

This is another message

Start a consumer

Kafka also has a command line consumer that will dump out messages to standard output.

$ bin/kafka-console-consumer.sh --bootstrap-server localhost:9092 --topic test --from-beginning

This is a message

This is another message

If you have each of the above commands running in a different terminal then you should now be able to type messages into the producer terminal and see them appear in the consumer terminal.

All of the command line tools have additional options; running the command with no arguments will display usage information documenting them in more detail.
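The producer and consumer can also be driven without typing, which is useful for a quick smoke test. The sketch below pipes messages into the console producer and reads a fixed number back with the console consumer's --max-messages and --timeout-ms options. The function names and the `KAFKA_PRODUCER`, `KAFKA_CONSUMER` and `BROKER` variables are hypothetical, and the 10-second timeout is an arbitrary choice.

```shell
KAFKA_PRODUCER="${KAFKA_PRODUCER:-bin/kafka-console-producer.sh}"
KAFKA_CONSUMER="${KAFKA_CONSUMER:-bin/kafka-console-consumer.sh}"
BROKER="${BROKER:-localhost:9092}"

# Send every argument after the topic name as one message (one per line).
send_messages() {
  local topic="$1"
  shift
  printf '%s\n' "$@" | "$KAFKA_PRODUCER" --broker-list "$BROKER" --topic "$topic"
}

# Read the first <count> messages of a topic, then exit.
# The consumer gives up if no message arrives within 10 seconds.
read_messages() {
  local topic="$1"
  local count="$2"
  "$KAFKA_CONSUMER" --bootstrap-server "$BROKER" --topic "$topic" \
    --from-beginning --max-messages "$count" --timeout-ms 10000
}
```

From the Kafka directory, `send_messages test "This is a message" "This is another message"` followed by `read_messages test 2` reproduces the exchange above in a single terminal.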

Source

http://rasimsen.com/index.php?title=Apache_Kafka